The Local-First LLM Wiki

SwarmVault is an open-source knowledge graph builder and RAG knowledge base built on Andrej Karpathy's LLM Wiki pattern. Start with one command, then grow into graph navigation, review queues, context packs, task history, and automation as the vault becomes more useful. Everything starts as local files you can inspect: raw sources, a Markdown wiki, and graph state. Part of the SwarmClaw network.

Quick Start

Install globally and start compiling knowledge in minutes.

# Install the CLI
$ npm install -g @swarmvaultai/cli

# Build a vault from a repo or docs folder
$ swarmvault quickstart ./your-repo

# Let SwarmVault choose the next step
$ swarmvault next

# Then inspect and ask
$ swarmvault query "What are the key concepts?"
$ swarmvault doctor
$ swarmvault graph serve

Local workspace

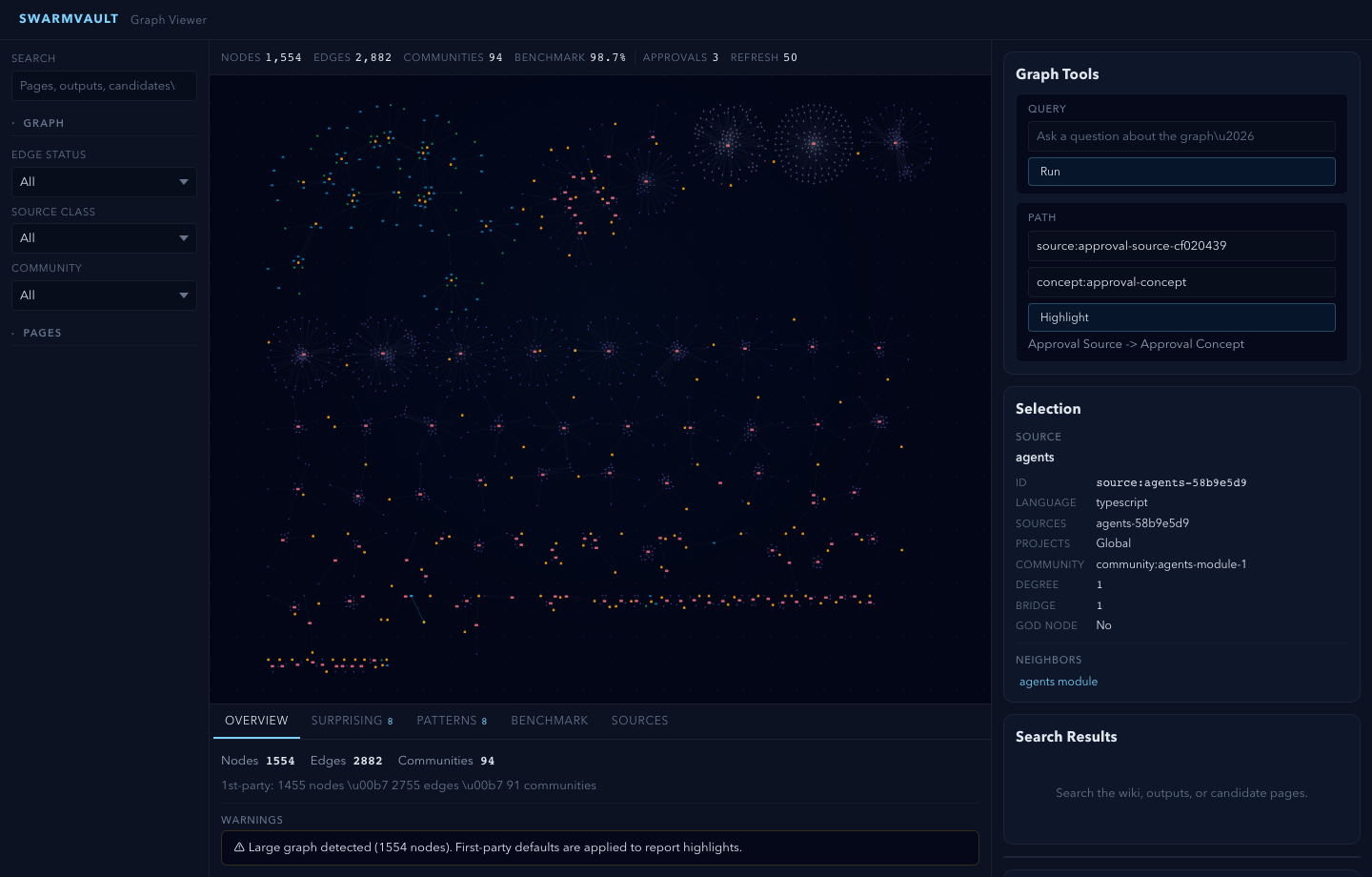

See the graph, approvals, candidates, and search in one screen

The installed viewer is not a mock dashboard. It reads the same local files the CLI writes: `graph.json`, `report.json`, approvals, candidates, and saved outputs. That keeps the product explainable instead of hiding the real artifact model behind another app.

Graph-first reports stay clickable, benchmark freshness is visible, and review queues live in the same local surface as search and preview.

The screenshot here comes from a real browser gut-check against the installed package path, not a design mock.

How It Works

SwarmVault has three layers (raw sources, wiki, schema) and a workflow that compounds. Unlike ephemeral RAG, which retrieves raw chunks afresh for every query, SwarmVault builds a persistent knowledge artifact that gets richer with every source you add.

Step 01

Shape

Start with `swarmvault.schema.md` so the vault has explicit naming rules, categories, and grounding expectations.

Step 02

Ingest

Feed in books, datasets, slide decks, screenshots, files, or URLs. SwarmVault extracts text and creates immutable source manifests with content hashes.
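As a rough illustration of what "immutable source manifests with content hashes" can mean, the Python sketch below hashes extracted text with SHA-256 and stores the result in a frozen record. The hash algorithm, the `SourceManifest` fields, and the `src_` id prefix are assumptions made for illustration, not SwarmVault's documented format.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: assigning to a field after creation raises an error
class SourceManifest:
    """Hypothetical manifest record; not SwarmVault's actual schema."""
    source_id: str
    origin: str  # file path or URL the text was extracted from
    sha256: str  # hex digest of the extracted text


def make_manifest(origin: str, extracted_text: str) -> SourceManifest:
    digest = hashlib.sha256(extracted_text.encode("utf-8")).hexdigest()
    # Derive the id from the hash so identical content always gets the same id.
    return SourceManifest(source_id=f"src_{digest[:12]}", origin=origin, sha256=digest)


a = make_manifest("books/ch1.txt", "Call me Ishmael.")
b = make_manifest("copies/ch1.txt", "Call me Ishmael.")
print(a.source_id == b.source_id)  # True: same text, same hash, same id
```

Deriving the id from the hash means re-ingesting an unchanged file is a no-op, while any edit to the text produces a new manifest rather than silently overwriting the old one.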

Step 03

Compile

The engine analyzes sources locally, applies the vault schema, and builds a knowledge graph with provenance. Model providers are optional and can add richer synthesis along the way.
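A minimal sketch of a graph with provenance, assuming facts arrive as hypothetical (subject, relation, object) triples tagged with the id of the source that produced them. The `KnowledgeGraph` class is illustrative only; it shows the idea that every edge keeps a record of which sources support it.

```python
from dataclasses import dataclass, field

Triple = tuple[str, str, str]  # (subject, relation, object)


@dataclass
class KnowledgeGraph:
    """Hypothetical in-memory graph in which every edge records its sources."""
    nodes: set[str] = field(default_factory=set)
    edges: dict[Triple, set[str]] = field(default_factory=dict)

    def add_fact(self, subject: str, relation: str, obj: str, source_id: str) -> None:
        self.nodes.update((subject, obj))
        # A repeated fact keeps one edge but accumulates every supporting source.
        self.edges.setdefault((subject, relation, obj), set()).add(source_id)

    def provenance(self, subject: str, relation: str, obj: str) -> set[str]:
        # Return a copy so callers cannot mutate the graph's internal sets.
        return set(self.edges.get((subject, relation, obj), set()))


g = KnowledgeGraph()
g.add_fact("Transformer", "uses", "Attention", "src_a")
g.add_fact("Transformer", "uses", "Attention", "src_b")
print(sorted(g.provenance("Transformer", "uses", "Attention")))  # ['src_a', 'src_b']
```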

Step 04

Compound

Ask questions, save useful answers, run exploration loops, and expose the vault to agents over MCP (Model Context Protocol).

A local-first RAG knowledge base, knowledge graph, and agent memory in one

From raw sources to structured knowledge: SwarmVault is the open-source LLM Wiki that handles the full compilation lifecycle.

Local-First

Everything runs on your machine by default, and no cloud service is required. Start fully offline with the built-in heuristic workflow; no API keys are needed.

Schema-Guided

Each vault ships with `swarmvault.schema.md`, so compile and query behavior can follow domain-specific naming, categories, and grounding rules.

Graph-Based Wiki

Compile sources into a knowledge graph with nodes, edges, provenance tracking, and auto-generated Markdown wiki pages.
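One way to turn a graph node into a wiki page is sketched below. The page layout, the `render_page` helper, and the `[[wiki-link]]` syntax are assumptions chosen for illustration; SwarmVault's generated pages may look different.

```python
def render_page(title: str, facts: list[tuple[str, str, list[str]]]) -> str:
    """Render one hypothetical wiki page from (relation, target, source_ids) facts."""
    lines = [f"# {title}", ""]
    for relation, target, source_ids in facts:
        citations = ", ".join(f"[{sid}]" for sid in sorted(source_ids))
        # [[target]] links to another generated page (wiki-link syntax, assumed here).
        lines.append(f"- {relation} [[{target}]] {citations}")
    return "\n".join(lines) + "\n"


page = render_page("Transformer", [("uses", "Attention", ["src_b", "src_a"])])
print(page)
```

Sorting the citations keeps regenerated pages byte-stable when the underlying facts have not changed, so diffs between compiles stay small and reviewable.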

Optional Model Providers

Add OpenAI, Anthropic, Gemini, Ollama, or any OpenAI-compatible API when you want richer synthesis or capability-specific upgrades.
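OpenAI-compatible providers accept the same Chat Completions request shape. The sketch below only builds such a request with Python's standard library; the base URL assumes a local Ollama server, the model name `llama3.1` is a placeholder, and SwarmVault's own provider configuration is not shown here.

```python
import json
import urllib.request


def chat_request(base_url: str, model: str, prompt: str, api_key: str = "") -> urllib.request.Request:
    """Build, but do not send, a Chat Completions request for an OpenAI-compatible server."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    headers = {"Content-Type": "application/json"}
    if api_key:  # hosted providers need a key; a local Ollama server does not check one
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )


req = chat_request("http://localhost:11434/v1", "llama3.1", "Summarize this source in three bullets.")
# urllib.request.urlopen(req) would send it; that call is left out so the sketch runs offline.
```

Because only the base URL, model name, and key change between providers, switching from a local model to a hosted one is a configuration change rather than a code change.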

CLI-Powered

Run init, ingest, inbox import, compile, query, tasks, retrieval, explore, lint, watch, MCP, and graph serve, all from the command line.

Anti-Drift Linting

Detects stale sources, conflicting claims, missing citations, and coverage gaps. Deep lint can also attach external evidence.
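Two of the lint categories above can be sketched in a few lines: a source is stale when its current text no longer matches the hash recorded at ingest, and a claim lacks a citation when it lists no sources. The `lint` function and its input shapes are hypothetical; conflict detection, coverage gaps, and deep lint's external evidence are not modeled.

```python
import hashlib


def sha256(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def lint(recorded: dict[str, str], current: dict[str, str], claims: list[dict]) -> list[str]:
    """Hypothetical checks for stale sources and missing citations.

    recorded: source id -> hash stored at ingest time
    current:  source id -> text as it exists now
    claims:   [{"text": ..., "sources": [source ids]}]
    """
    findings = []
    for source_id, recorded_hash in recorded.items():
        text = current.get(source_id)
        if text is None:
            findings.append(f"missing source: {source_id}")
        elif sha256(text) != recorded_hash:
            findings.append(f"stale source: {source_id}")  # content changed since ingest
    for claim in claims:
        if not claim.get("sources"):
            findings.append(f"missing citation: {claim['text']}")
    return findings


report = lint(
    recorded={"src_a": sha256("draft v1")},
    current={"src_a": "draft v2"},
    claims=[{"text": "Attention replaces recurrence", "sources": []}],
)
print(report)
```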

Agent-Ready

Auto-install agent rules for Claude, Cursor, and Codex; record task ledgers; or expose the vault directly through MCP to compatible clients.

Worked examples

Start from real example vaults, not abstract feature lists

Example

Code repo

Ingest a real repo tree, compile module pages, resolve local imports, and inspect the graph report plus benchmark artifacts.
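Resolving local imports means mapping an import specifier to a file in the same repo tree. The sketch below assumes Node-style relative specifiers (`./lib/util`) and a guessed extension order; `resolve_import` is a hypothetical helper, not SwarmVault's resolver, which may handle other languages and rules.

```python
import posixpath
from typing import Optional

# Assumed probing order, loosely modeled on Node-style resolution.
SUFFIXES = ("", ".ts", ".js", "/index.ts", "/index.js")


def resolve_import(importer: str, specifier: str, repo_files: set[str]) -> Optional[str]:
    """Map a relative import in `importer` to a repo file, or None if it is external."""
    if not specifier.startswith("."):
        return None  # bare names such as "react" refer to packages, not local files
    base = posixpath.normpath(posixpath.join(posixpath.dirname(importer), specifier))
    for suffix in SUFFIXES:
        if base + suffix in repo_files:
            return base + suffix
    return None


files = {"src/app.ts", "src/lib/util.ts", "src/lib/index.ts"}
print(resolve_import("src/app.ts", "./lib/util", files))  # src/lib/util.ts
print(resolve_import("src/app.ts", "./lib", files))       # src/lib/index.ts
```

Each resolved pair becomes an "imports" edge between two module pages; unresolved or bare specifiers can be kept as external dependencies instead of being dropped.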

Example

Research capture

Use `swarmvault add` for arXiv, DOI, and article URLs, then compile them into durable source pages with normalized metadata.
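Normalizing research references usually starts with recognizing what kind of identifier was passed in. This hypothetical `classify` helper handles new-style arXiv ids and URLs, DOIs (bare, `doi:`-prefixed, or `doi.org` links), and plain article URLs. Old-style arXiv ids such as `hep-th/9901001` are deliberately left out, and the real `swarmvault add` logic may differ.

```python
import re

ARXIV_ID = re.compile(r"^(\d{4}\.\d{4,5})(v\d+)?$")
ARXIV_URL = re.compile(r"^https?://arxiv\.org/(?:abs|pdf)/(\d{4}\.\d{4,5})(v\d+)?(?:\.pdf)?$")
DOI = re.compile(r"^(?:doi:|https?://(?:dx\.)?doi\.org/)?(10\.\d{4,9}/\S+)$", re.IGNORECASE)


def classify(ref: str) -> tuple[str, str]:
    """Return (kind, normalized id); a hypothetical helper, not the CLI's own logic."""
    ref = ref.strip()
    m = ARXIV_ID.match(ref) or ARXIV_URL.match(ref)
    if m:
        return ("arxiv", m.group(1))  # version suffix dropped
    m = DOI.match(ref)
    if m:
        return ("doi", m.group(1).lower())  # DOI matching is case-insensitive
    if ref.startswith(("http://", "https://")):
        return ("url", ref)
    raise ValueError(f"unrecognized reference: {ref!r}")


for ref in ["1706.03762", "https://arxiv.org/abs/1706.03762v7", "doi:10.1038/nature14539"]:
    print(classify(ref))
```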

Example

Review loop

Stage compile changes, inspect approval bundles, save reports, and promote candidates instead of trusting silent rewrites.
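The review loop's core rule, that compiled changes wait for approval before touching the live wiki, can be sketched as a small staging queue. `ReviewQueue` and its methods are hypothetical names for illustration, not SwarmVault's API.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewQueue:
    """Hypothetical staging area: compiled pages wait here until someone approves them."""
    wiki: dict[str, str] = field(default_factory=dict)    # live pages: path -> text
    staged: dict[str, str] = field(default_factory=dict)  # candidates awaiting review

    def stage(self, path: str, new_text: str) -> None:
        self.staged[path] = new_text  # the live wiki is untouched at this point

    def pending(self) -> list[str]:
        return sorted(self.staged)

    def approve(self, path: str) -> None:
        self.wiki[path] = self.staged.pop(path)  # promote the candidate

    def reject(self, path: str) -> None:
        del self.staged[path]


q = ReviewQueue(wiki={"concepts/rag.md": "old summary"})
q.stage("concepts/rag.md", "new summary")
q.stage("concepts/graph.md", "first draft")
print(q.pending())  # ['concepts/graph.md', 'concepts/rag.md']
q.approve("concepts/rag.md")
q.reject("concepts/graph.md")
print(q.wiki)  # {'concepts/rag.md': 'new summary'}
```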

Example

Book reading

Build a fan wiki chapter by chapter, with character pages, theme pages, and plot threads that compound as you read.

Example

Research deep-dive

Ingest papers and articles on a focused topic, build an evolving thesis, and let lint surface contradictions across sources.

Example

Personal Memex

Journals, health logs, podcast notes, and goals compiled into a structured self-knowledge vault with cross-referenced themes.


Build your local-first LLM Wiki in minutes

Download the desktop app or install the open-source CLI and start compiling your RAG knowledge base, knowledge graph, and AI second brain. No API keys required to get started.